AI content is generic because the process is broken

You scroll through LinkedIn and see article after article with the same predictable opening lines. “In today’s rapidly evolving landscape…” “Let’s delve into…” It’s AI slop: content so generic it hurts to read. It’s flooding the internet: technically correct text with no personality, no depth and no original insight.

The irony is that everyone uses AI for content, but nobody’s truly satisfied. The models are powerful enough: GPT-4, Claude and Gemini can all produce impressive text. The problem is how we use them. Most people open ChatGPT, type a vague prompt and hope for the best. If the result disappoints, they click “regenerate” and try again. That process has three fundamental problems:

Too little context

The AI gets no tone of voice, no brand identity and no audience information. Just a title or a vague prompt. How can the AI create something unique without context?

No expert validation

The people who actually know the subject aren't involved in the process. Feedback comes after the fact and ad hoc: 'this is wrong', without explaining why. Subject matter experts see the draft and end up rewriting everything because the AI missed crucial nuances.

Regenerating isn't iterating

Every time you click 'regenerate', the AI throws everything away and starts over. Parts that were already right get lost, and nothing from previous versions carries over. It's content roulette: keep spinning until you get lucky.

The problem isn’t the AI but the process. The same models produce better content when you give them context, structure and iterative feedback.

So we asked: what if we fundamentally rethink the process? Not by improving the AI, but by changing how people work with it. We built unwrite.it, an experiment to test whether a structured workflow actually makes AI content better.

Structured input, feedback and read-only editing

unwrite.it combines three phases, each addressing one of the problems in the standard workflow:

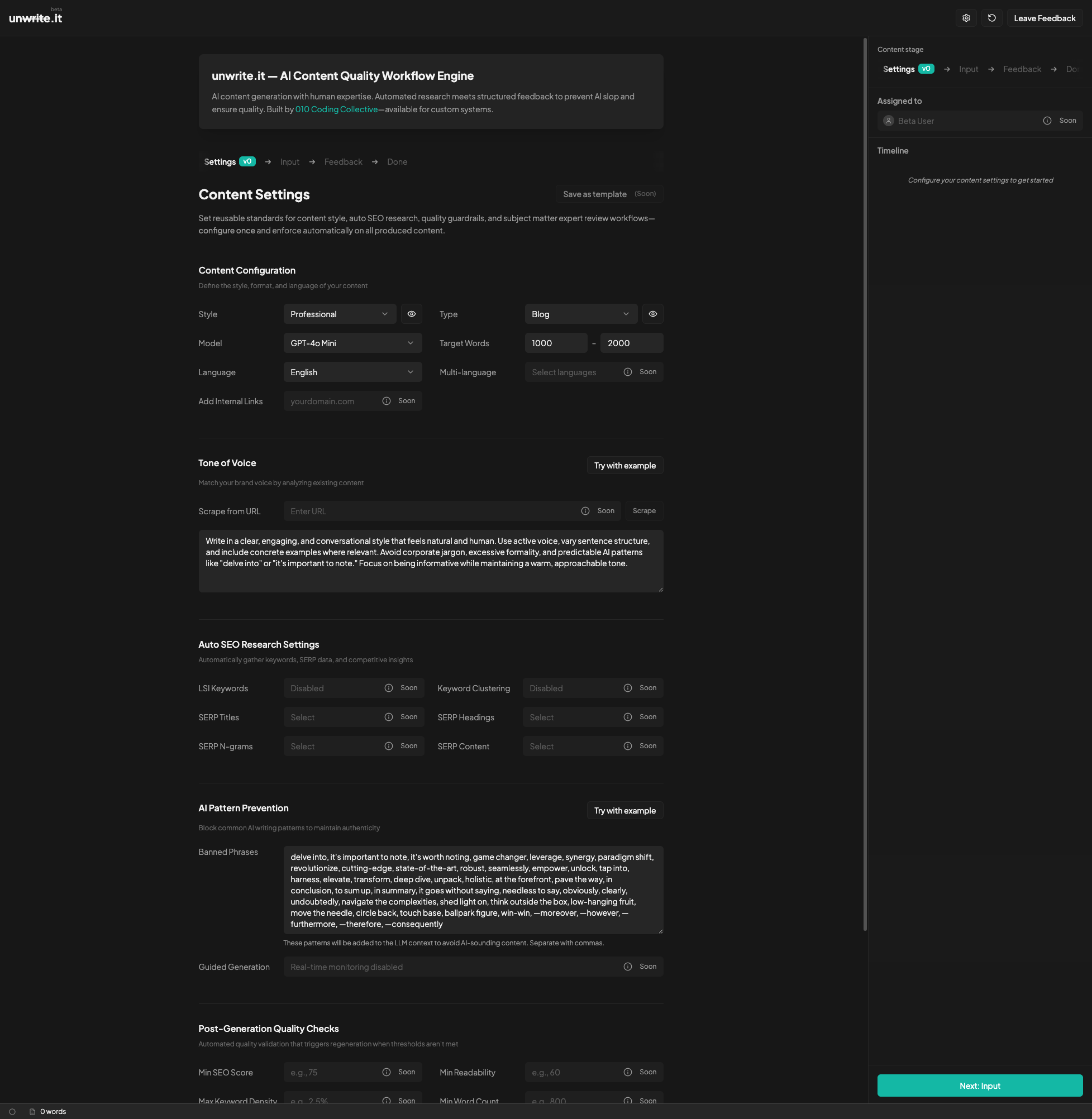

Settings: set your standards

Configure your standards once: tone of voice (describe your writing style or paste examples), content type, model and desired word count. Crucially, you also keep a list of forbidden phrases the AI must avoid. By explicitly saying 'don't use these phrases', you force the model to be more original. No 'delve into', no 'it's important to note', no 'revolutionize'.
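To make the forbidden-phrase idea concrete, here is a minimal Python sketch of how such a list could be enforced twice: once as an explicit instruction in the prompt and once as a check on the generated draft. It is an illustration, not unwrite.it's code; the function names and phrase list are hypothetical, and the check only catches exact phrases (after lowercasing and unifying curly apostrophes), not paraphrases.

```python
import re

# Hypothetical example list, taken from the phrases mentioned above.
FORBIDDEN_PHRASES = ["delve into", "it's important to note", "revolutionize"]


def normalize(text: str) -> str:
    """Lowercase and turn curly apostrophes into straight ones."""
    return text.replace("\u2019", "'").lower()


def find_forbidden(text: str, phrases: list[str]) -> list[str]:
    """Return the forbidden phrases that appear in text, in list order.

    Matches whole phrases only, so "revolutionized" would need its own entry.
    """
    normalized = normalize(text)
    hits = []
    for phrase in phrases:
        pattern = r"\b" + re.escape(normalize(phrase)) + r"\b"
        if re.search(pattern, normalized):
            hits.append(phrase)
    return hits


def forbidden_instruction(phrases: list[str]) -> str:
    """Render the list as an explicit instruction for the prompt."""
    quoted = ", ".join(f'"{p}"' for p in phrases)
    return f"Never use any of these phrases: {quoted}."


draft = "Let’s delve into how AI will revolutionize content."
print(find_forbidden(draft, FORBIDDEN_PHRASES))  # ['delve into', 'revolutionize']
```

A draft that returns a non-empty list can be sent back with the offending phrases named, instead of being regenerated blindly.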

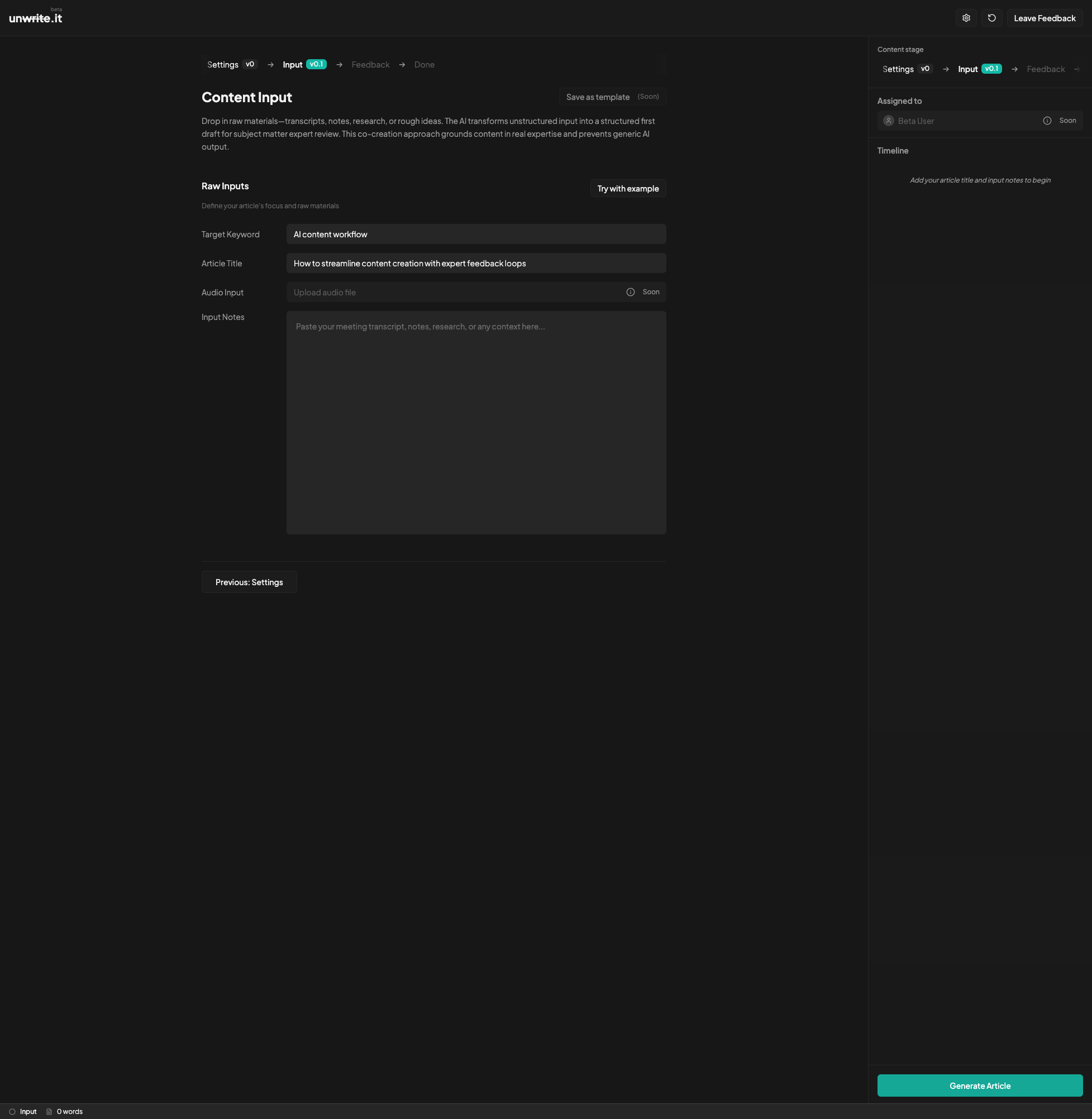

Input: dump your raw material

Article title, keyword and, above all, your input notes: meeting transcripts, interview notes, bullet points and raw ideas. You don't have to clean them up; the AI organizes them. This is where you give the AI the context it needs.
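A rough sketch of how the standing settings and the per-article input might be combined into a single request. The class and field names are hypothetical; the output uses the common role/content chat-message format, and the actual API call is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Settings:
    # Hypothetical fields: configured once, reused for every article.
    tone_of_voice: str
    content_type: str
    model: str  # passed to the API call, not into the prompt text
    word_count: int
    forbidden_phrases: list[str] = field(default_factory=list)


@dataclass
class ArticleInput:
    # Hypothetical fields: filled in per article.
    title: str
    keyword: str
    notes: str  # transcripts, interview notes, bullet points; no cleanup needed


def build_messages(s: Settings, a: ArticleInput) -> list[dict[str, str]]:
    """Combine standing settings and raw input into chat-style messages."""
    system_lines = [
        f"You write {s.content_type} content.",
        f"Tone of voice: {s.tone_of_voice}",
        f"Target length: about {s.word_count} words.",
    ]
    if s.forbidden_phrases:
        system_lines.append("Never use these phrases: " + "; ".join(s.forbidden_phrases))
    user_lines = [
        f"Title: {a.title}",
        f"Keyword: {a.keyword}",
        "Raw notes (organize these; they are the primary source):",
        a.notes,
    ]
    return [
        {"role": "system", "content": "\n".join(system_lines)},
        {"role": "user", "content": "\n".join(user_lines)},
    ]


settings = Settings(
    tone_of_voice="Direct, informal, no hype.",
    content_type="blog",
    model="gpt-4",
    word_count=800,
    forbidden_phrases=["delve into", "revolutionize"],
)
article = ArticleInput(
    title="Why our onboarding takes two weeks",
    keyword="onboarding",
    notes="- first week: mostly access requests\n- second week: shadowing support calls",
)
messages = build_messages(settings, article)
```

Keeping settings and input separate means the tone of voice and forbidden phrases are written once and reused, while only the raw notes change per article.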

Feedback: refine via comments

The editor is read-only: you can't type in it. Instead, you select text and add a comment: 'This is wrong because X' or 'Missing nuance about Y'. The AI reads all comments, takes their context into account, makes targeted adjustments and creates a new version. Repeat until you're satisfied, with full version history.
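The comment loop can be sketched with two small data structures: a comment tied to the exact text it refers to, and a document that keeps every version. This is an assumed design, not unwrite.it's implementation; `Comment`, `Document`, `revision_prompt` and `apply_revision` are hypothetical names, and the model call that turns the prompt into revised text is omitted.

```python
from dataclasses import dataclass, field


@dataclass
class Comment:
    quote: str  # the exact text the reviewer selected
    note: str   # what is wrong and why, e.g. "too formal for our tone"


@dataclass
class Document:
    versions: list[str] = field(default_factory=list)  # v1, v2, v3, ...

    @property
    def current(self) -> str:
        return self.versions[-1]


def revision_prompt(draft: str, comments: list[Comment]) -> str:
    """Ask for targeted edits: address every comment, keep everything else."""
    lines = [
        "Revise the draft below. Address every comment and keep all other text unchanged.",
        "",
    ]
    for i, c in enumerate(comments, start=1):
        if c.quote not in draft:
            raise ValueError(f"comment {i} quotes text that is not in the draft: {c.quote!r}")
        lines.append(f'{i}. On "{c.quote}": {c.note}')
    lines += ["", "DRAFT:", draft]
    return "\n".join(lines)


def apply_revision(doc: Document, revised: str) -> int:
    """Store the revised text as a new version instead of overwriting; return its number."""
    doc.versions.append(revised)
    return len(doc.versions)


doc = Document(["Our tool will revolutionize how teams write."])
prompt = revision_prompt(
    doc.current,
    [Comment("revolutionize", "Too much hype for our tone; say what it actually does.")],
)
# `prompt` would go to the model; its answer becomes the next version.
version = apply_revision(doc, "Our tool turns raw meeting notes into a first draft.")
```

Rejecting comments whose quoted text is not in the current draft keeps feedback anchored to that version, and appending instead of overwriting is what makes the version history possible.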

The read-only editor is the most controversial choice, but also the most powerful. If you can just type, you fix things ad-hoc: “oh this word fits better”, type, done. The AI learns nothing and the next article has the same problems. But if you have to write comments, you have to articulate what’s wrong and why. “This sentence is too formal for our tone” is more valuable than simply changing the word, because the AI can recognize that pattern and apply it to the entire article and to all future articles.

Read-only feels frustrating at first. “Why can’t I just change this word?!” But it forces you to think: why do you want to change it? That context is more valuable than the change itself.

The process matters more than the model

The most important finding: GPT-4 in a good workflow performed better than the newer GPT-4o with vague prompts. The model isn't what makes the difference; the process is. The model gets better instructions, more context and structured feedback, so the output improves consistently.

The experiment also confirmed that constraints produce better feedback. The read-only editor irritates people, but it works. When you can’t quickly adjust something, you have to think about what exactly is wrong, why it’s wrong and what should be different. Feedback written that way is ten times more valuable than “fix this word”, and writing it forces you to identify the underlying problem.

We also found that text streaming in during generation feels much faster than waiting for a finished block of text, and that version history was indispensable: comparing v1, v2 and v3 shows which feedback had the most effect.
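Comparing versions doesn't require anything special. As an illustration, Python's standard `difflib` can list the word-level changes between two versions, which is one way to see what a given round of comments actually changed. The helper name `word_changes` is hypothetical.

```python
import difflib


def word_changes(old: str, new: str) -> list[tuple[str, str, str]]:
    """Return (operation, old words, new words) for each changed span."""
    a, b = old.split(), new.split()
    changes = []
    matcher = difflib.SequenceMatcher(a=a, b=b)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # tag is "replace", "delete" or "insert"
            changes.append((tag, " ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


v1 = "This sentence is very formal indeed."
v2 = "This sentence is casual."
print(word_changes(v1, v2))  # [('replace', 'very formal indeed.', 'casual.')]
```

Splitting on words rather than lines suits prose, where a single paragraph often sits on one line and a line diff would flag the whole paragraph.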

Let’s be clear: unwrite.it is a working prototype, not a product. It has structured feedback, read-only editing, version control, streaming generation and forbidden phrase enforcement. What it doesn’t have: collaboration features, team workflows, CMS integrations or cross-device synchronization. We deliberately built it as a fully client-side app that runs in the browser with your own OpenAI API key: quick to build, private by default and no server to maintain. That was enough to test whether the approach works, and after a few weeks of testing the answer was yes.

Conclusion: fix the process, don’t wait for GPT-10

AI slop doesn’t come from bad AI but from bad process design. The standard workflow (prompt, generate, publish or regenerate) is too simple: it lacks structured input, context, expert validation and iterative refinement.

The difference is in the workflow

The future isn’t “AI replaces people” but AI doing the heavy lifting while people provide expertise and judgment. For content, that means AI writes and structures, while people validate and refine through a structured feedback loop. Domain experts don’t need to be writers: they only validate the content. Writers don’t need to be experts: they orchestrate the process.

Prompt and hope

Vague prompt, no context, no expert validation. Regenerate until it's 'good enough'. Generic output without depth or personality.

Structured feedback

Context upfront, iterative feedback from experts and constraints that enforce originality. Output that improves with every iteration.

If we want to make AI content better, we need to fix the process. unwrite.it shows it’s possible, right now, with the models we have today.

Try it yourself

unwrite.it is a working prototype showing that workflow matters more than the model. Curious how structured feedback improves AI content?